What are ETL processes

The origin of the data can be very diverse and it can be found in different formats. For this reason, it is often necessary to standardize the raw information received for better organization within the data warehouse.

What is ETL: functions and phases

The ETL process basically consists of moving data between different sources and applying transformations along the way if necessary.

In many cases it is necessary to migrate information from one database to another or collect information that comes from external servers and store them in a clean, organized manner in a data warehouse, also known as a data warehouse.

We are going to analyze each of the phases of an ETL process:

1. Extraction

This part consists of collecting raw data from different sources of information. Normally the source is flat files or relational databases (SQL).

However, connections can be made to non-relational databases (NoSQL) or information that comes from real-time systems.

In the extraction part, it is also verified that the information received by the sender has the correct format and the necessary structure so that the following ETL steps can take place.

2.Transformation

The transformation part consists of applying certain modifications to the data received from the extraction phase according to a set of business rules.

Some of these transformations consist of calculating new values from existing ones, selecting only certain columns, encoding categorical values, rotating columns and rows through pivoting, etc.

Once the data already complies with the predefined rules, it is time to move on to the next phase: loading.

3.Load

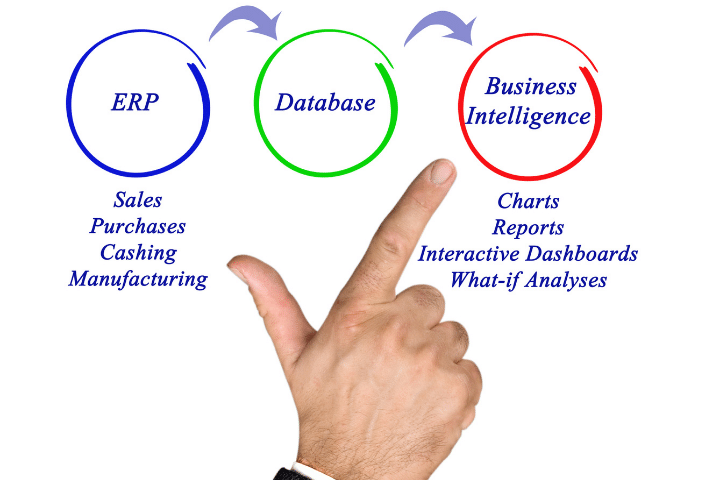

The last phase consists of loading the clean data with the correct structure into the destination system. Normally this data dump is done in a data warehouse. There they are stored safely and can be analyzed and visualized later with BI tools such as Power BI or Tableau.

ELT processes: data lakes

ELT stands for Extract, Load and Transform and is a method that differs from ETL processes in that the transformation of data takes place after loading into the target system. That is, the system that stores the information must be capable of applying the necessary transformations.

In this case, the data storage format consists of a data lake. A data lake is a raw data store which does not have any predefined structure.

We could say that the data is stored in an orderly manner, unlike data warehouses (typical of ETL processes) where the information is stored in an orderly manner following a certain scheme.

The ELT process is typically used in non-relational or NoSQL databases.

Differences between ETL and ELT

There is no better or worse method. Each process is optimal for certain functionalities.

The Big Data engineer must be able to design a good pipeline according to the needs required in each application.

The main difference between ETL and ELT is when the data is processed, cleaned and selected. In the case of ETL it happens before being saved at the destination, so there is some information that is lost during the process.

Instead, ELT, the data is transformed directly at the destination, thus preserving the initial data.

Typically, the storage infrastructure in which an ETL process is used is known as a data warehouse. In the case of ELT processes, this infrastructure is called a data lake. Data lakes do not have any schema, they are raw data and are not ordered.

In contrast, data warehouses are data structures ordered through a predetermined schema.

Using one or the other depends on the objective of the project. For example, if ingestion speed is very important then we will opt for an ELT process since we will not waste time transforming the data before saving it to the destination system.